Products

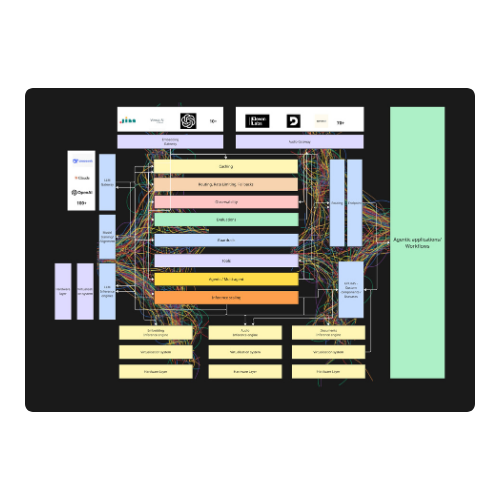

Bud AI Foundry

The all-in-one control panel for enterprise GenAI. A unified platform to experiment, build, scale, and consume private and cloud AI models and agents.

Multi-modal Inference

Model Management

Guardrails

Observability

Bud Sentinel

AI guardrails powered by Resource Aware Attention. Runs faster on any CPU than competing guardrails do on a $15K GPU, with industry-leading accuracy.

CPU-Optimized

Low Latency

High Accuracy

Edge Ready

Bud Models

Domain- and use-case-specific fine-tuned models. Optimized for performance across various industries and applications.

Domain-specific

Fine-tuned

Optimized

Production-ready

Bud SENTRY

Zero-trust model ingestion framework integrated within Bud Runtime. Protects against supply chain attacks with sandboxed evaluation, deep scanning, and continuous runtime monitoring.

Zero-Trust Ingestion

Sandboxed Evaluation

Malware Scanning

Runtime Monitoring

Bud FCSP

Full-stack AI compute and service platform. End-to-end infrastructure for deploying, scaling, and managing AI workloads in the cloud.

Cloud Infrastructure

Scalable Compute

AI Workloads

Managed Services

Bud Latent

Advanced latent space exploration and representation learning. Unlock deep insights from your data with state-of-the-art embedding and feature extraction.

Latent Space

Embeddings

Feature Learning

Data Insights

Resources

Solutions

Bud For Cloud Service Providers

Transform from a bare-metal provider into an AI-first cloud platform. Enable Model-as-a-Service, Token-as-a-Service, and AI PaaS offerings with enterprise-grade infrastructure.

Model-as-a-Service

Token-as-a-Service

AI PaaS

Sovereign AI

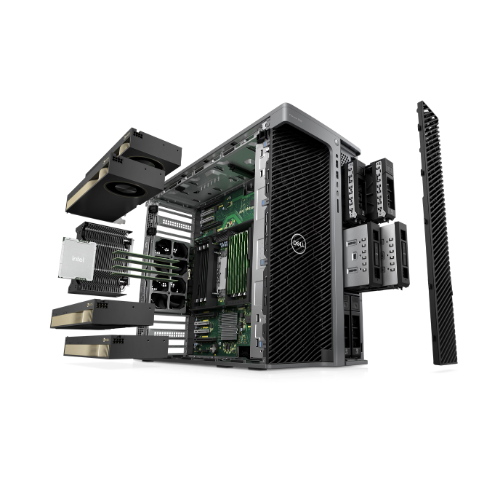

Bud For Original Equipment Manufacturers

Ship AI-native devices that work out of the box. Pre-integrated AI stack with zero-config deployment, OTA updates, and enterprise security built in.

AI-in-a-Box

Zero-Config Deploy

OTA Updates

Edge Security
