Here is a number worth sitting with. According to McKinsey’s 2025 State of AI survey of nearly 2,000 executives across 105 countries, 88 percent of organizations now use AI regularly in at least one business function. That sounds like progress. It is not the full story.

Only 6 percent of those organizations qualify as AI high performers — firms that attribute more than 5 percent of EBIT to AI and describe themselves as capturing significant value. The remaining 94 percent are running AI. They are not transforming with it.

- 88% of enterprises use AI regularly in at least one business function

- 6% qualify as AI high performers generating measurable EBIT impact

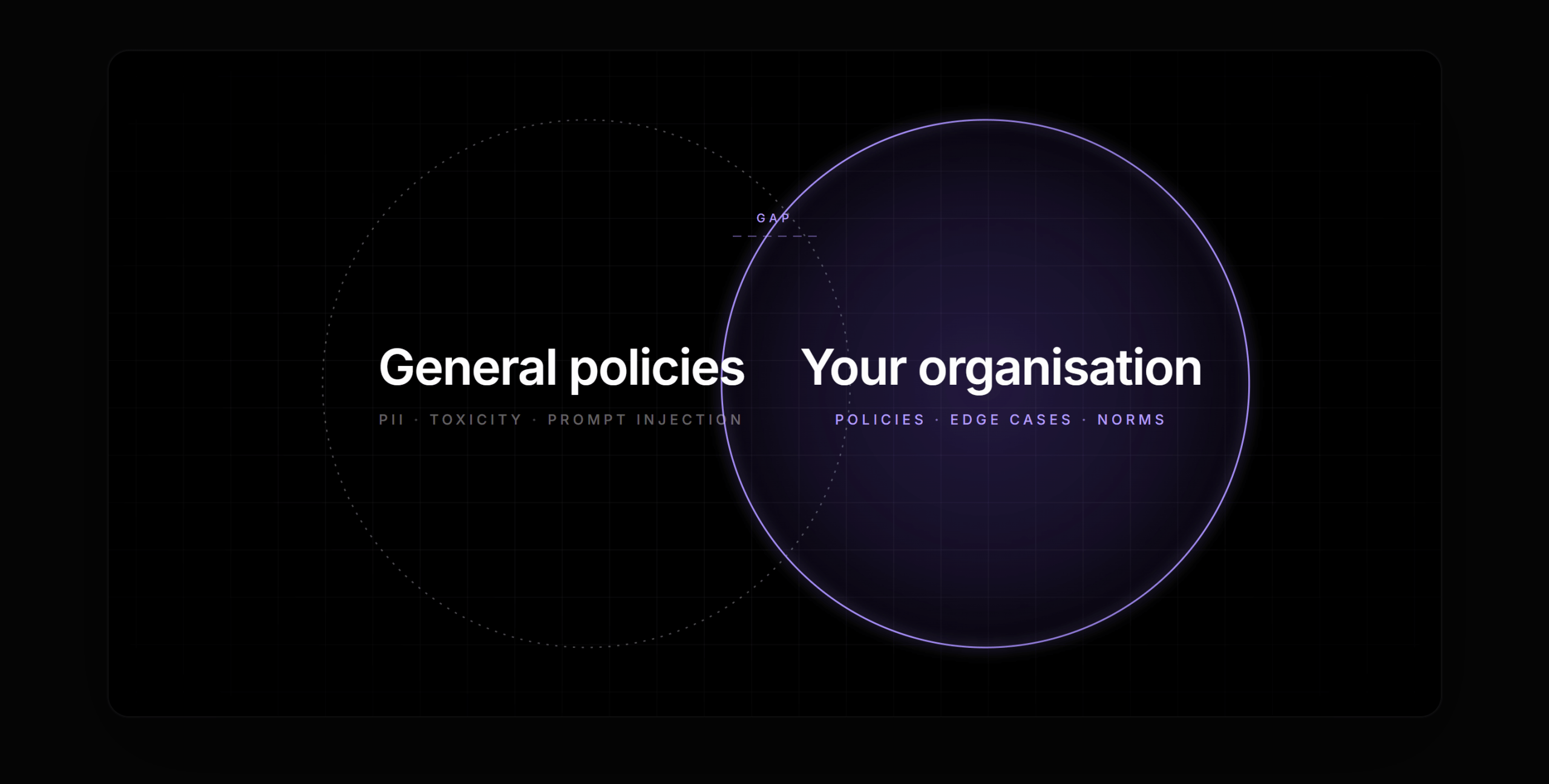

The gap is not about access to better models. It is not a cloud budget problem. What the organizations in that 6 percent have, and the other 94 percent lack, is not technology. It is architecture.

Which brings us to a term that is used liberally and defined poorly: AI-native. Marketing materials apply it to anything with a language model attached. That is not what it means. The distinction between AI-native and AI-enabled matters, because confusing the two leads organizations to make the exact investments that keep them stuck in the 94 percent.

An AI-enabled enterprise adds AI to existing workflows. An AI-native enterprise redesigns operations around AI as a foundational layer.

What AI-Enabled Actually Looks Like

An AI-enabled organization has added AI capabilities to existing applications and workflows. There is a chatbot on the support portal. There is a Copilot integrated into the productivity suite. There is a model running in the background of one or two internal tools.

The underlying operating model is intact. Humans still perform most steps. AI provides assistance or surfaces a recommendation at defined trigger points. Each deployment is scoped to a single application or dataset. The value is real — but it is bounded, because the system was never designed around AI. AI was added to a system designed without it.

This is the default state for most enterprises today. It is also the architectural trap that explains the 94 percent.

What AI-Native Actually Looks Like

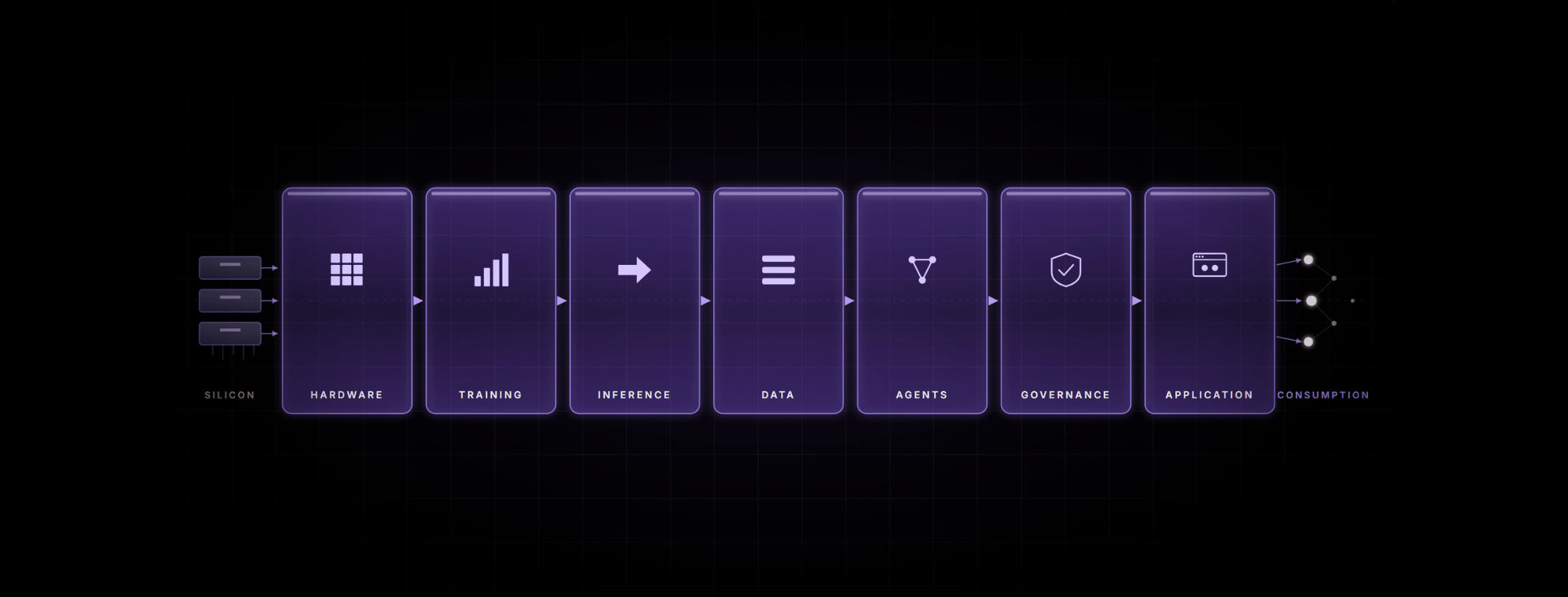

An AI-native enterprise is architecturally different at its foundation. Operations are redesigned around AI as a core layer — embedded in systems, processes, and decision pathways from the ground up, not retrofitted onto them.

Data is governed with the same rigor applied to financial assets. AI agents orchestrate end-to-end workflows, with humans engaged at exception-handling and high-stakes approval steps. Infrastructure is managed as a financial instrument with active utilization targets and unit-economics visibility — not as an IT budget line that gets reviewed quarterly.
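To make the division of labor concrete, here is a minimal sketch of how an agent-run workflow might decide when to pull a human in. Everything in it is an illustrative assumption rather than a prescribed design: the `Step` fields, the `route_step` function, and both thresholds are hypothetical, and real escalation rules would come from an organization's own risk policy.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration; real values are policy decisions.
CONFIDENCE_FLOOR = 0.85
HIGH_STAKES_AMOUNT = 10_000.0


@dataclass
class Step:
    name: str
    confidence: float  # agent's confidence in its own output, 0.0 to 1.0
    amount: float      # monetary exposure of the decision, in dollars


def route_step(step: Step) -> str:
    """Decide who handles a workflow step: the agent or a human."""
    if step.amount >= HIGH_STAKES_AMOUNT:
        return "human_approval"   # high-stakes approval step
    if step.confidence < CONFIDENCE_FLOOR:
        return "human_exception"  # exception handling
    return "agent"                # routine case, handled end to end by the agent


steps = [
    Step("classify_invoice", 0.97, 120.0),
    Step("match_purchase_order", 0.62, 450.0),
    Step("approve_payment", 0.99, 25_000.0),
]
decisions = {s.name: route_step(s) for s in steps}
print(decisions)
```

In this sketch the agent is the default handler and the human is the exception, which is the inverse of the AI-enabled pattern where a person performs each step and AI assists at trigger points.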

McKinsey’s research makes the contrast measurable. High-performing organizations are 3.6 times more likely to be targeting transformative, enterprise-level change rather than incremental efficiency improvements. And 55 percent report fundamental process redesign when deploying AI — compared to roughly 20 percent of their peers. Among the 25 attributes McKinsey tested for correlation with EBIT impact, workflow redesign ranked first.

The Six Dimensions Where It Shows Up

The difference between AI-enabled and AI-native is not a single decision. It shows up across six operational dimensions. Here is what each looks like in practice.

| Dimension | AI-Enabled | AI-Native |
| --- | --- | --- |
| Workflow Design | AI features added to existing processes. Humans perform most steps with AI assistance at defined trigger points. | Operations redesigned around AI agents. Humans engaged at exception-handling and high-stakes approval steps only. |
| Model Strategy | Single-model deployments per application. Difficult to govern at scale and costly to expand. | Governed portfolio of LLMs, SLMs, and domain models, routed by policy, cost, latency, and accuracy requirements. |
| Data Architecture | Siloed retrieval, fragmented lineage, ad-hoc access decisions made at deployment time. | Curated data products with privacy-by-design, full auditability, and lineage tracking built in from the start. |
| Governance | Manual review cycles, inconsistent controls, compliance added as a final check before release. | Model registry, evaluation gates, guardrails, and incident playbooks built into the platform layer itself. |
| Infrastructure | Over-provisioned, high CapEx, average GPU utilization sitting below 40 percent. | Heterogeneous virtualization, hybrid inference, and multi-cloud portability, targeting 70–90% utilization. |
| Economics | Unpredictable API spend, high OpEx, low infrastructure ROI from underutilized capacity. | Predictable TCO with hybrid SLM/LLM routing. On-premise payback typically within six months. |
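The Model Strategy row is the easiest of the six to picture in code. Below is a rough sketch of policy-based routing across a governed portfolio: pick the cheapest model that still meets a request's latency and accuracy requirements. The model names, prices, latencies, and accuracy scores are all made up for illustration, and `select_model` is a hypothetical helper, not any vendor's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_m_tokens: float  # dollars per million tokens (hypothetical)
    p95_latency_ms: int       # hypothetical measured latency
    eval_accuracy: float      # hypothetical score from an evaluation gate


@dataclass(frozen=True)
class Policy:
    max_latency_ms: int
    min_accuracy: float


# A hypothetical governed portfolio: one SLM, one domain model, one LLM.
PORTFOLIO = [
    ModelProfile("small-general", 0.30, 150, 0.82),
    ModelProfile("domain-finance", 1.20, 300, 0.93),
    ModelProfile("large-general", 10.00, 900, 0.95),
]


def select_model(policy: Policy,
                 portfolio: List[ModelProfile] = PORTFOLIO) -> Optional[ModelProfile]:
    """Return the cheapest model that satisfies the policy, or None."""
    eligible = [
        m for m in portfolio
        if m.p95_latency_ms <= policy.max_latency_ms
        and m.eval_accuracy >= policy.min_accuracy
    ]
    return min(eligible, key=lambda m: m.cost_per_m_tokens, default=None)


chosen = select_model(Policy(max_latency_ms=500, min_accuracy=0.90))
print(chosen.name if chosen else "no eligible model; escalate")
```

The point of the sketch is that the routing decision lives in a shared policy layer rather than being hard-coded per application, which is what makes a multi-model portfolio governable.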

Why the Distinction Is Urgent Right Now

Three forces are compressing the window for getting this right.

First, agentic AI, meaning systems capable of autonomous planning and multi-step execution across tools and workflows, is arriving fast: the market is growing at roughly 44 percent a year. The architectural decisions being made today will determine whether organizations can safely benefit from it. Those without the right data, governance, and infrastructure foundations will not be able to operationalize agentic systems as they mature, and that gap compounds over time.

Second, infrastructure economics increasingly penalize the AI-enabled approach. A single 8×H100 cloud GPU cluster costs between $788,000 and $963,000 per year, and on-premise equivalents typically break even within six months. Meanwhile, most enterprises run their GPUs at average utilization below 40 percent, which suggests the hardware they already have could absorb far more work before any new capacity is needed. The gap is operational, not capital. AI-native organizations are capturing that value; AI-enabled organizations are paying for compute they are not using.
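The utilization point is easier to feel with a little arithmetic. The sketch below takes the annual cloud cost range quoted above and works out the cost per GPU-hour that actually did useful work at 40 percent versus 80 percent utilization. It also derives the up-front outlay a six-month payback would imply, under the loud simplifying assumption that power, cooling, staffing, and financing are ignored. The function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours
GPUS_PER_CLUSTER = 8       # the 8xH100 cluster from the text

# Annual cloud cost range quoted in the text, in US dollars.
CLOUD_ANNUAL_COST = (788_000, 963_000)


def cost_per_useful_gpu_hour(annual_cost: float, utilization: float) -> float:
    """Annual spend divided by the GPU-hours that actually did work."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return annual_cost / (GPUS_PER_CLUSTER * HOURS_PER_YEAR * utilization)


def implied_capex_ceiling(annual_cloud_cost: float, payback_months: int = 6) -> float:
    """Largest up-front outlay that pays back within `payback_months`.

    Simplifying assumption: ignores power, cooling, staffing, and financing.
    """
    return annual_cloud_cost * payback_months / 12


for annual in CLOUD_ANNUAL_COST:
    at_40 = cost_per_useful_gpu_hour(annual, 0.40)
    at_80 = cost_per_useful_gpu_hour(annual, 0.80)
    ceiling = implied_capex_ceiling(annual)
    print(f"${annual:,}/yr: ${at_40:.2f} per useful GPU-hour at 40%, "
          f"${at_80:.2f} at 80%, implied capex ceiling ${ceiling:,.0f}")
```

Whatever the exact inputs, doubling utilization halves the cost of each useful GPU-hour, which is why utilization targets belong next to unit economics rather than in a quarterly IT review.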

Third, 60 to 70 percent of enterprise inference traffic is basic enough to be served by small language models at $0.20 to $0.50 per million tokens. Most organizations pay large language model rates for all of it. That is a structural cost inefficiency that AI-native operations eliminate by design — through intelligent routing, not manual intervention.
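Here is a back-of-the-envelope sketch of what that routing is worth. The SLM share and SLM price range come from the paragraph above; the LLM price and the monthly token volume are assumptions invented for the example, so treat the output as a shape, not a forecast.

```python
# From the text: 60 to 70 percent of traffic is SLM-servable,
# at $0.20 to $0.50 per million tokens.
# Assumptions (not from the text): the LLM price and the monthly volume.
LLM_RATE = 10.00          # hypothetical dollars per million tokens
MONTHLY_M_TOKENS = 1_000  # hypothetical volume, in millions of tokens


def blended_cost(m_tokens: float, slm_share: float, slm_rate: float,
                 llm_rate: float = LLM_RATE) -> float:
    """Monthly cost when `slm_share` of traffic is routed to a small model."""
    slm_cost = m_tokens * slm_share * slm_rate
    llm_cost = m_tokens * (1 - slm_share) * llm_rate
    return slm_cost + llm_cost


baseline = blended_cost(MONTHLY_M_TOKENS, 0.0, 0.0)       # everything on the LLM
conservative = blended_cost(MONTHLY_M_TOKENS, 0.60, 0.50)  # low share, high SLM price
optimistic = blended_cost(MONTHLY_M_TOKENS, 0.70, 0.20)    # high share, low SLM price

for label, cost in [("all-LLM baseline", baseline),
                    ("60% routed at $0.50", conservative),
                    ("70% routed at $0.20", optimistic)]:
    saving = 1 - cost / baseline
    print(f"{label}: ${cost:,.0f} per month ({saving:.0%} saved)")
```

Under these assumed numbers the bill falls by somewhere between roughly 57 and 69 percent. The exact figure moves with the assumed LLM rate, but the structure holds: almost all of the remaining spend is the traffic that genuinely needs the larger model.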

The Differentiator Is Not the Model

The 6 percent who are generating real returns from AI do not have access to better models than the 94 percent. They have better execution discipline. They have redesigned workflows rather than augmented them. They have built governance in rather than bolting it on. They have treated infrastructure as a financial asset rather than an IT line item.

Becoming AI-native is not a technology acquisition. It is an architectural commitment — one that requires organizational will as much as technical capability. The organizations that make it now will compound that advantage as the technology matures. Those that continue bolting AI onto legacy workflows will find the catch-up cost, measured in capital, talent, and competitive position, increasingly prohibitive.

The first step is simply being precise about which one you are building.

Want the full framework?

This post draws from Bud Ecosystem’s white paper, Transforming to an AI-Native Enterprise — a practitioner’s guide covering the business case, implementation playbook, KPI framework, and executive action list for organizations ready to move from AI-enabled to AI-native.
